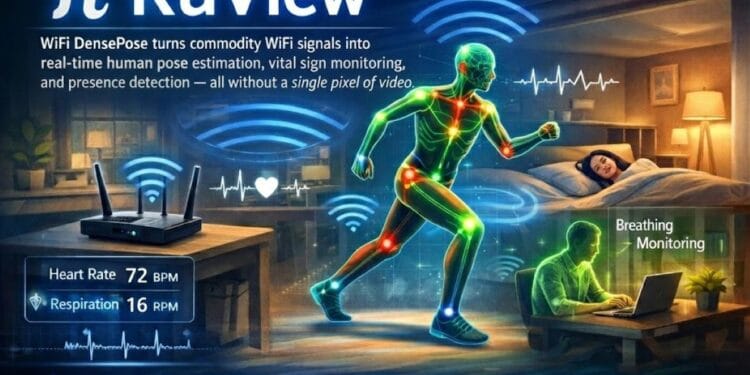

WiFi DensePose tracks humans without any camera, and the idea is blowing minds in tech circles right now. The WiFi signals bouncing around your home or office every day can be turned into detailed maps of body positions, breathing patterns, heart rates, and even whether anyone is in the room at all. No video, no photos, just radio waves doing the work.

A fresh open-source project called RuView, built on earlier research from Carnegie Mellon University, makes this real and ready to run on ordinary routers. People are sharing clips, demos and worried takes online, because it feels like the future arrived overnight, with big privacy implications.

The core trick comes from how human bodies disturb WiFi signals. When you move or breathe, your body scatters, reflects and absorbs those invisible waves. Compatible WiFi hardware can report these effects as channel state information, or CSI: a per-subcarrier record of how the signal's amplitude and phase changed between transmitter and receiver.

That raw data shows small phase shifts and amplitude drops that reveal body shape, motion and even the tiny movements of a breathing chest. Researchers first cracked dense pose mapping from WiFi in 2022 and 2023, with a paper from a CMU team.
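To make the amplitude and phase idea concrete, here is a minimal Python sketch. It rests on two simplifying assumptions. First, CSI arrives as one complex number per subcarrier; real CSI tools also add antenna and time dimensions. Second, it applies a common clean-up step: unwrap the phase across subcarriers, then remove its linear trend, which mostly reflects timing offsets rather than the body. The function names are hypothetical and are not taken from RuView or the CMU paper.

```python
import cmath
import math


def amplitude_and_phase(csi):
    """Split complex CSI values (one per subcarrier) into amplitude and raw phase."""
    amplitudes = [abs(h) for h in csi]
    phases = [cmath.phase(h) for h in csi]  # each value lies in (-pi, pi]
    return amplitudes, phases


def unwrap(phases):
    """Remove artificial 2*pi jumps between neighbouring subcarriers."""
    unwrapped = [phases[0]]
    for p in phases[1:]:
        step = p - unwrapped[-1]
        # Wrap the step into [-pi, pi] before accumulating it.
        step -= 2 * math.pi * round(step / (2 * math.pi))
        unwrapped.append(unwrapped[-1] + step)
    return unwrapped


def remove_linear_trend(values):
    """Subtract the least-squares line fitted over subcarrier index."""
    n = len(values)
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    slope = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values)) / sxx
    return [y - mean_y - slope * (x - mean_x) for x, y in enumerate(values)]


# Hypothetical example: 30 subcarriers with constant amplitude and a steep
# linear phase ramp (the kind a timing offset produces), so the raw phase wraps.
csi = [2.0 * cmath.exp(1j * 0.9 * k) for k in range(30)]
amps, raw_phase = amplitude_and_phase(csi)
clean_phase = remove_linear_trend(unwrap(raw_phase))
print(max(abs(p) for p in clean_phase))  # close to zero: only the ramp was present
```

In real data, whatever phase variation survives the detrending is the part that may carry information about people in the room.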

They fed CSI into a neural network built like established computer vision models, swapping images for signals. The system learnt to map signal patterns to UV coordinates across 24 body regions, just as DensePose does with photos. Results came close to camera-based accuracy, even when people were blocked by walls or standing in groups.
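DensePose-style output is usually stored as an "IUV" map. Each pixel carries a part index I, which is 0 for background and 1 to 24 for a body region. It also carries U and V coordinates in [0, 1] that locate the pixel on that part's surface. The sketch below assumes that layout and uses a tiny hand-made map instead of real model output. It summarises which body parts are visible; `summarise_iuv` is a hypothetical helper, not part of any published API.

```python
from collections import defaultdict

NUM_PARTS = 24  # DensePose splits the body surface into 24 regions


def summarise_iuv(iuv_map):
    """Return {part_index: (pixel_count, mean_u, mean_v)} for visible body parts.

    iuv_map is a 2-D grid (list of rows); each cell is a tuple (i, u, v)
    where i == 0 means background and 1..24 name a body region.
    """
    sums = defaultdict(lambda: [0, 0.0, 0.0])
    for row in iuv_map:
        for i, u, v in row:
            if i == 0:
                continue  # background pixel, no body surface here
            if not 1 <= i <= NUM_PARTS:
                raise ValueError(f"invalid part index {i}")
            entry = sums[i]
            entry[0] += 1
            entry[1] += u
            entry[2] += v
    return {i: (c, su / c, sv / c) for i, (c, su, sv) in sorted(sums.items())}


# Tiny hypothetical 2x3 prediction: two parts visible, the rest background.
iuv = [
    [(0, 0.0, 0.0), (2, 0.25, 0.5), (2, 0.75, 0.5)],
    [(0, 0.0, 0.0), (15, 0.5, 0.2), (0, 0.0, 0.0)],
]
print(summarise_iuv(iuv))  # {2: (2, 0.5, 0.5), 15: (1, 0.5, 0.2)}
```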

Fast forward to early 2026: developer Ruvnet took that academic work, built a production-style implementation in Rust for speed and released it on GitHub. The repo exploded, quickly passing thousands of stars, with some calling it the trending story of the moment, ahead of global headlines.

RuView runs at the edge; no cloud is needed. It processes signals in real time, with latency under 50 milliseconds and pose updates at 30 frames per second. It can detect up to ten people at once, sense through walls at ranges of up to five metres, pick up breathing rates between six and thirty breaths per minute, and track heart rates from forty to one hundred twenty beats per minute. All of it is contact-free, with no wearables. You plug in compatible routers, run the code, and watch skeletons appear on screen from signal data alone.
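Those vital-sign ranges translate into frequency bands. Six to thirty breaths per minute is 0.1 to 0.5 Hz, and forty to one hundred twenty beats per minute is roughly 0.67 to 2 Hz. A minimal way to estimate either rate is to search each band for the frequency with the most spectral power. This assumes you already have one cleaned CSI amplitude stream sampled at a known rate. The sketch below does the search with a plain DFT on a synthetic signal. It is not RuView's algorithm, and real signals would need filtering and motion rejection first.

```python
import math


def dominant_rate_per_min(samples, fs_hz, lo_per_min, hi_per_min, step_per_min=0.1):
    """Return the rate (cycles per minute) with the most spectral power in a band."""
    n = len(samples)
    mean = sum(samples) / n
    centred = [x - mean for x in samples]  # drop the DC level before the search
    best_rate, best_power = lo_per_min, -1.0
    steps = int(round((hi_per_min - lo_per_min) / step_per_min))
    for s in range(steps + 1):
        rate = lo_per_min + s * step_per_min
        omega = 2 * math.pi * (rate / 60.0) / fs_hz  # radians per sample
        re = sum(x * math.cos(omega * k) for k, x in enumerate(centred))
        im = sum(x * math.sin(omega * k) for k, x in enumerate(centred))
        power = re * re + im * im
        if power > best_power:
            best_rate, best_power = rate, power
    return best_rate


# Synthetic 60 s amplitude trace at 10 Hz: a strong breathing component at
# 15 breaths/min (0.25 Hz) and a weak heartbeat component at 72 beats/min (1.2 Hz).
FS_HZ = 10.0
amplitude_trace = [
    5.0
    + 1.0 * math.sin(2 * math.pi * 0.25 * k / FS_HZ)
    + 0.1 * math.sin(2 * math.pi * 1.2 * k / FS_HZ)
    for k in range(600)
]
breathing = dominant_rate_per_min(amplitude_trace, FS_HZ, 6, 30)
heart = dominant_rate_per_min(amplitude_trace, FS_HZ, 40, 120)
print(f"breathing ~ {breathing:.1f}/min, heart ~ {heart:.1f}/min")
```

Searching each band separately lets the much weaker heartbeat be found even though breathing dominates the overall signal.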

Imagine the uses. At home, it can watch for falls among elderly residents without cameras pointed at bedrooms or bathrooms. Carers get alerts if someone falls or stops moving. Hospitals can monitor patients overnight, catching irregular breathing or skipped heartbeats early.

Search teams at collapsed buildings could locate people trapped under rubble by their vital signs, since radio signals penetrate debris better than light does. Smart offices can count occupants and tell whether people are sitting, standing or slumped, for better energy use or safety checks. Fitness apps can track workout form while preserving privacy. Security systems can spot intruders by their movement patterns without recording faces.

Privacy sits at the heart of why this draws so much attention. Cameras capture everything: your face, your clothes and the details of your room. WiFi sensing skips all that. It sees shapes, motion and vital signs, but no identifying features, and no images are stored.

Supporters say it fixes the creep factor in surveillance-heavy setups. Critics still point to risks. Anyone who compromises the system could map your daily routines, infer your sleep patterns, detect arguments from gestures or tell when you leave home.

Bad actors could turn home routers into silent watchers. RuView keeps processing local by design, but deployment choices still matter. Who controls the code? Who can access the outputs? For now, early adopters are testing in controlled settings such as labs and personal projects, while regulators weigh rules for wider rollout.

The setup uses commodity gear, as long as it exposes CSI. Some hardware gives better data than others, such as certain Intel cards or specific Atheros chips. You install the software, collect signals, train a model or use a pre-built one, and infer poses live.
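The collect-then-infer loop can be sketched as a sliding window. The program buffers the latest CSI frames and, once the window is full, runs the model every time a new frame arrives. Everything here is hypothetical: the `WINDOW` size, the frame format and `fake_model` all stand in for whatever RuView or your own trained model actually uses.

```python
from collections import deque

WINDOW = 5  # hypothetical number of consecutive CSI frames per model input


def fake_model(frames):
    """Stand-in for a trained pose model: returns a dummy result per window.

    Here it simply reports the mean amplitude of the newest frame.
    """
    newest = frames[-1]
    return {"frames_used": len(frames), "mean_amp": sum(newest) / len(newest)}


def run_live(frame_source, model, window=WINDOW):
    """Feed frames through a sliding window and yield one prediction per new frame."""
    buffer = deque(maxlen=window)  # old frames fall off automatically
    for frame in frame_source:
        buffer.append(frame)
        if len(buffer) == window:  # wait until the first window is full
            yield model(list(buffer))


# Simulated stream of 8 frames, each holding 3 subcarrier amplitudes.
stream = ([float(t), float(t), float(t)] for t in range(8))
predictions = list(run_live(stream, fake_model))
print(len(predictions), predictions[-1])  # 4 {'frames_used': 5, 'mean_amp': 7.0}
```

A bounded `deque` keeps memory constant no matter how long the stream runs, which suits an always-on edge device.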

Visualisers show stick figures or heat maps overlaid on floor plans, so you can see what the signals reveal. In good conditions accuracy is high, with tests showing ninety-plus per cent agreement with cameras, though walls, clutter or crowds reduce it somewhat. Still impressive for zero visual input.
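The figures above do not say which metric sits behind "ninety-plus per cent". One common way to score pose agreement against a camera reference is PCK, the percentage of correct keypoints. Under PCK, a predicted keypoint counts as correct if it lies within a threshold distance of the reference point. The sketch below assumes 2-D keypoints and a fixed pixel threshold. Both are illustrative choices, not RuView's evaluation setup.

```python
import math


def pck(predicted, reference, threshold):
    """Fraction of keypoints whose predicted position is within `threshold` of the reference."""
    if len(predicted) != len(reference) or not reference:
        raise ValueError("need two equal-length, non-empty keypoint lists")
    correct = sum(
        1
        for (px, py), (rx, ry) in zip(predicted, reference)
        if math.hypot(px - rx, py - ry) <= threshold
    )
    return correct / len(reference)


# Hypothetical keypoints (pixels): camera reference vs WiFi-based prediction.
camera = [(100, 50), (110, 90), (90, 90), (105, 150), (95, 150)]
wifi = [(102, 52), (111, 95), (70, 92), (104, 149), (96, 153)]
print(pck(wifi, camera, threshold=10))  # 0.8: one keypoint is about 20 px off
```

The threshold choice matters a lot: loosen it enough and every keypoint "matches".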

This builds on years of WiFi sensing work. Older techniques detected presence or basic gestures, such as waving. Dense versions add fine detail: full-body mapping and vital signs. CMU's paper showed the approach could be comparable to image-based methods. Open implementations like RuView make it hackable and runnable today.
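Those older presence-detection tricks can be surprisingly simple. A minimal sketch assumes a single CSI amplitude stream. An empty, still room gives a fairly stable amplitude, while a moving person makes it fluctuate. So a rolling variance above some threshold is treated as "someone is here". The window length and threshold below are hypothetical and would need calibrating for each room.

```python
from statistics import pvariance


def presence_flags(amplitudes, window, threshold):
    """Flag each full window whose amplitude variance exceeds `threshold`."""
    flags = []
    for end in range(window, len(amplitudes) + 1):
        chunk = amplitudes[end - window:end]
        flags.append(pvariance(chunk) > threshold)
    return flags


# Hypothetical trace: quiet room, then someone walks through, then quiet again.
quiet = [10.0, 10.1, 9.9, 10.0, 10.1, 9.9]
moving = [10.0, 13.0, 8.0, 12.5, 7.5, 11.0]
trace = quiet + moving + quiet
flags = presence_flags(trace, window=4, threshold=0.5)
print(flags)
```

This catches motion, not a person sitting perfectly still, which is one reason the denser methods above go further.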

Communities on GitHub and Reddit swap tips, pull requests and fixes. One user joked that his agents could now see through walls after plugging them in. Others worry about dystopian home spying. Balanced views hold that the potential shines in healthcare, rescue and elder care, while safeguards remain essential.

As March 2026 rolls on, experiments multiply. Hobbyists set up demos in apartments to track family without creepy cams. Startups eye commercial versions for nursing homes and gyms. Researchers push boundaries: multi-room coverage, outdoor use, and better noise handling.

The line between a clever hack and an everyday tool blurs fast. WiFi, once just the thing that kept us connected, now quietly observes us in new ways. Whether that excites or unsettles depends on who you ask, but the tech works – no pixels required.